
In this last critical single-pixel eye experiment, we demonstrate a key survival behavior of an organism such as a primitive early sea worm, which had only one eye spot on the front of its feeding end. Remember that in the biological world, photosensitive spots can be thought of as neurons that have migrated to the surface of the head and gained enhanced photosensitivity. Light detection mattered for two reasons to the earliest multicellular organisms, which first showed this behavior nearly a billion years ago. First, it made the day-night feeding cycle easier to detect: during the day, some organisms would hide from predators and from the relentless UV radiation of the raw sun. Second, it served as an escape mechanism: by burying itself in the mud or finding a dark alcove, the organism could escape from predators. The detection of light made such behaviors possible, and it has equal benefits for robots.

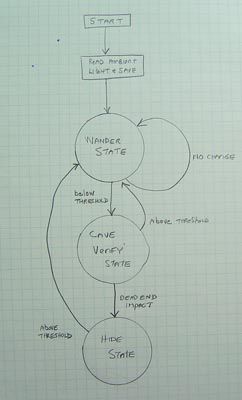

Programming

The VLR was programmed with a simple three-state Finite State Machine. The three states (Random Wander, Cave Verify, and Hide) enable the robot to react instantly to its environment and find a dark alcove to take up residence in. This advances the visual behavioral intelligence to the next level, that of a simple, primitive multicellular worm. Here is a detailed description of the program that defines its Artificial Intelligence.


The Finite State Machine

START - In the biological realm, this would be the birth of the organism. Here, we represent it by turning on the robot's power switch.

READ AMBIENT AND SAVE - In an organism, billions of years of evolution set the threshold level between what counts as bright and what counts as dark. Since the robot does not have millennia to evolve, it takes a brightness reading upon power-up, which we set as the daytime or full-brightness level. Any time the robot sees a drop from this value, it may be at a potential cave.
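The power-up calibration can be sketched in a few lines of Python. This is only an illustration, not the robot's actual firmware: `read_sensor` is a hypothetical callable standing in for the photocell input, and averaging several samples is an assumption (the text only says that a reading is taken).

```python
def read_ambient(read_sensor, samples=8):
    """Average a few power-up readings into a full-brightness reference.

    read_sensor is a hypothetical zero-argument callable that returns a
    number. Averaging is an assumption; a single reading would also match
    the description in the text.
    """
    if samples < 1:
        raise ValueError("samples must be at least 1")
    total = sum(read_sensor() for _ in range(samples))
    return total / samples


def relative_brightness(reading, ambient):
    """Express a reading as a fraction of the saved power-up level."""
    if ambient <= 0:
        raise ValueError("ambient reference must be positive")
    return reading / ambient
```

Storing the reference once and comparing later readings against it as a fraction keeps the rest of the program independent of the sensor's raw units.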

RANDOM WANDER STATE - During the daytime, the organism exhibits a feeding pattern, be it random, S-shaped, or spiral. This maximizes ground coverage for maximum food intake. Here, the robot implements a random wander mode with full bumper impact avoidance, just as any living creature would.


CAVE VERIFY STATE - If the brightness drops below what the pre-programmed neurons in the worm's brain call bright, then it must be getting dark: this may be the entrance to a possible cave, or sediment may be starting to bury the worm for cover. In the robot, if the brightness drops to less than 10 percent of full, then we may have entered a cave.

To verify, the robot drives forward until its bumper hits a wall. If it is still dark at that point, the cave is verified.

HIDE STATE - Once the worm or robot verifies that it is indeed in a dark alcove or buried, it halts and hides. This extreme survival measure is life-saving for the worm, and the robot now knows it is out of the way and parked in a safe place. Rip the cover off either one and expose it to the light, and it will go back into wander mode and search for a new hiding place.
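The three states described above can be sketched as a small transition function in Python. This is a minimal illustration, not the program that runs on the robot: the action names are hypothetical labels for motor commands, and returning to Random Wander when the wall turns out not to be dark is an assumption, since the text does not say what happens when verification fails.

```python
from enum import Enum, auto

DARK_FRACTION = 0.10  # "less than 10 percent of full", from the text


class State(Enum):
    RANDOM_WANDER = auto()
    CAVE_VERIFY = auto()
    HIDE = auto()


def is_dark(brightness, ambient):
    """True when the reading is below 10 percent of the power-up level."""
    return brightness < DARK_FRACTION * ambient


def step(state, brightness, bumper_hit, ambient):
    """Return (next_state, action) for one sensor sample.

    Action strings are hypothetical placeholders for motor commands.
    """
    dark = is_dark(brightness, ambient)

    if state is State.RANDOM_WANDER:
        if dark:
            # Possible cave: start driving forward to check it.
            return State.CAVE_VERIFY, "drive_forward"
        # Bumper avoidance stays active while wandering.
        action = "back_off_and_turn" if bumper_hit else "wander"
        return State.RANDOM_WANDER, action

    if state is State.CAVE_VERIFY:
        if not bumper_hit:
            return State.CAVE_VERIFY, "drive_forward"
        if dark:
            # At the wall and still dark: the cave is verified.
            return State.HIDE, "halt"
        # Assumption: a failed verification resumes wandering.
        return State.RANDOM_WANDER, "back_off_and_turn"

    # HIDE: stay parked until the light comes back.
    if dark:
        return State.HIDE, "halt"
    return State.RANDOM_WANDER, "wander"
```

A control loop would call `step` once per sensor sample, passing the brightness reading saved at power-up as `ambient`. Keeping the transitions in a pure function makes the behavior easy to check on a desk before any motors are involved.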

MOVIES

Two short low-res MPG movies are provided here. In the first, the robot is turned on, wanders against the side of the arena, bounces off, and then finds the cave (an inverted box). Since the robot is talking, turn up your volume to hear what it is saying. The second movie clip shows the same cave-hiding behavior, but with the camera riding ON THE ROBOT. Just for fun...

(Voice Transcript:

"Now Booting Up", "I am the Vision Logic Robot".

"Found Possible Cave", "I am in the Cave Now".....)

Movie 1

Movie 2

Practical application

I don't know about you, but stepping on a robot in the dark at night can be a crippling experience for both you and the robot. Parking the robot in a safe place, such as a charging hut or under the coffee table, is certainly safer than having the robot weather the night out in the open. A robot that is charged by solar cells can also benefit from this type of program. By reversing the thresholds in the cave verify state, we can have the robot look for a bright spot in the sun in the daytime for charging.
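Reversing the threshold can be illustrated with a small Python helper that builds either test from the same saved reference. This is a sketch under stated assumptions: the function name and the `seek_bright` flag are hypothetical, and the 1.5 fraction used for the sunny spot is an arbitrary example value; only the 10 percent figure comes from the cave experiment.

```python
def make_target_test(ambient, fraction, seek_bright=False):
    """Build a predicate that recognizes the spot the robot is looking for.

    ambient  -- brightness saved at power-up
    fraction -- threshold as a fraction of ambient (0.10 for the cave)
    seek_bright -- False looks for darkness, True looks for a bright spot
    """
    limit = fraction * ambient
    if seek_bright:
        return lambda reading: reading > limit
    return lambda reading: reading < limit


# Cave hiding: below 10 percent of the power-up level.
found_cave = make_target_test(200, 0.10)

# Solar charging (assumed threshold): well above the power-up level.
found_sun = make_target_test(200, 1.5, seek_bright=True)
```

The rest of the state machine would stay the same; only the comparison that triggers and confirms the target changes direction.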

Conclusions of the one-pixel eye experiments

In this last series of experiments and demonstrations, we have shown that it is certainly feasible to reproduce the visual AI behavior of a simple organism such as a protozoan, a worm, or a single-celled animal. From these humble beginnings, we hope to move on to more advanced vision experiments, starting next with a two-eye, photocell-like, non-imaging configuration. Stay tuned!
